2026-03-13

Where Your Eyes Actually Read

v2.4.0 release notes →

Fixate on the period at the end of this sentence. Without moving your eyes, notice how much of the line you can still read — a few characters left, several words right. Your effective reading window isn’t a circle. It’s a lopsided blob, stretched in the direction you’re about to read.

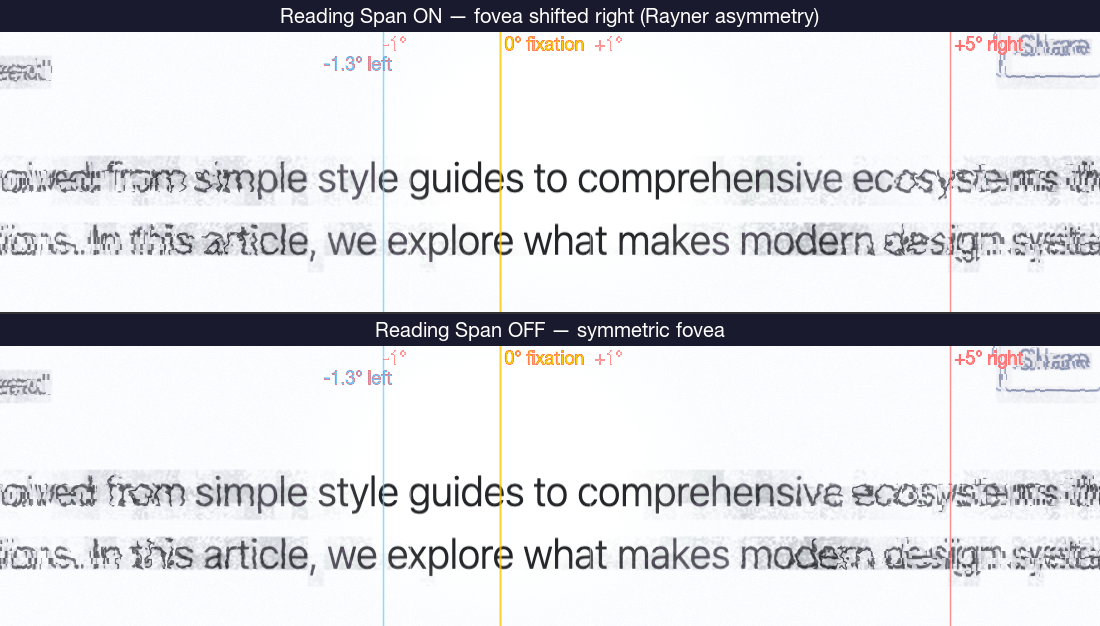

Keith Rayner measured this across decades of eye-tracking: the perceptual span during English reading extends ~1.3° left of fixation and ~5° right. It flips direction for Hebrew and Arabic readers — it’s attentional, not an acuity limit. Your visual system allocates preview resources where the next saccade will land.

Scrutinizer already tracked cursor velocity for saccadic blindness and already had the real-time DOM structure map exposing text regions. Building on both, the reading span envelope took ~80 lines across the gaze model and shader — the test harness was twice that.

The Reading Span Envelope

When horizontal cursor movement crosses text content, the foveal protection zone shifts in the reading direction. The shift is gated on three signals:

- Horizontal velocity: pursuit speed, not saccadic speed. The gate opens during smooth tracking (0.05–2.5 px/ms) and closes during fast jumps.

- Directionality: the shift follows the sign of horizontal velocity, so it handles LTR and RTL automatically.

- Text content: the structure map’s text channel gates the effect. Reading span only activates over text, not images or whitespace.

The shift magnitude is 70% of the foveal radius, producing roughly the 4:1 asymmetry Rayner documented. Over non-text content, or during vertical scrolling, the fovea stays circular.
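The three gates and the 70% shift can be sketched in a few lines. This is a minimal illustration, not the code in gaze-model.js: the function name, the argument shapes, and the hard on/off gate are assumptions (the real gate may ramp smoothly), while the velocity band and the 0.7 factor come from the description above.

```javascript
// Illustrative sketch only; names are hypothetical, not Scrutinizer's API.
const PURSUIT_MIN = 0.05;   // px/ms, lower bound of smooth tracking (from the text)
const PURSUIT_MAX = 2.5;    // px/ms, upper bound; faster movement is treated as a saccade
const SHIFT_FRACTION = 0.7; // shift magnitude as a fraction of the foveal radius

/**
 * Returns the center of the foveal protection zone.
 * gaze: {x, y} cursor position in px
 * vx: horizontal cursor velocity in px/ms (sign gives reading direction)
 * overText: whether the structure map reports text under the cursor
 * fovealRadius: foveal radius in px
 */
function readingSpanCenter(gaze, vx, overText, fovealRadius) {
  const speed = Math.abs(vx);
  const pursuing = speed >= PURSUIT_MIN && speed <= PURSUIT_MAX;

  // Any closed gate leaves the fovea circular and centered on the cursor.
  if (!overText || !pursuing) {
    return { x: gaze.x, y: gaze.y };
  }

  // Shift toward the direction of travel: +1 for LTR, -1 for RTL.
  const direction = Math.sign(vx);
  return { x: gaze.x + direction * SHIFT_FRACTION * fovealRadius, y: gaze.y };
}

// Same position and radius, three situations:
const ltr = readingSpanCenter({ x: 400, y: 300 }, 0.5, true, 45);   // shifted right
const rtl = readingSpanCenter({ x: 400, y: 300 }, -0.5, true, 45);  // shifted left
const jump = readingSpanCenter({ x: 400, y: 300 }, 5.0, true, 45);  // too fast, unshifted
```

Because the direction comes from the sign of the velocity, no language or script detection is needed; a purely vertical scroll has near-zero horizontal velocity and falls below the lower bound.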

Without this, a correctly-sized fovea makes reading under Scrutinizer harder than real peripheral vision warrants. The parafoveal preview region — where the visual system pre-processes the next word before a saccade lands — falls inside the degradation zone. The fovea-parafovea boundary is a gradient, not an edge, and reading is the task where that gradient matters most. Modeling the asymmetric span is modeling the system, not accommodating the user.

The reading span is on by default (View → Reading Span) and can be disabled for experiments that require a strict circular fovea.

This release also corrects the foveal radius. Previous versions used 2° as the radius rather than the anatomically correct 1° (2° diameter), which doubled the radius of the high-acuity zone. The fix halves the default from 90px to 45px.

Getting the Fovea Right

— a calibration post-mortem

The same review surfaced a calibration error that had been hiding in plain sight.

The fovea subtends about 2° of visual angle in diameter. Scrutinizer was using 2° as the radius, giving a high-acuity zone twice as wide as the anatomically correct one (and four times the area). At 90px with a 45 px/° calibration, the clear zone reached 2° from fixation, covering territory that should already show peripheral degradation.

A reviewer noticed because the demo “looked too sharp.” The error was internally consistent — the calibration doc stated “diameter (~2°)” on one line, then used 2° as a radius in the formula two lines later. Every downstream computation consumed the same wrong constant. The visual output was plausible because the entire eccentricity axis was stretched uniformly.

The Fix

Two values, changed together to preserve pixels-per-degree:

- fovea_deg: 2.0 → 1.0 (the anatomical correction)

- fovealRadius default: 90px → 45px

With ppd = fovealRadius / fovea_deg, both ratios equal 45. All eccentricity-dependent models (chromatic decay, spatial frequency cutoffs, crowding thresholds) produce identical output at identical pixel distances. The only behavioral change: the clear zone shrinks from 90px to 45px radius. Content at 60px from fixation, previously treated as foveal, now shows parafoveal degradation.
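The arithmetic is small enough to check directly. The sketch below uses the two parameter names from the fix; the helper functions and the object layout are assumptions made for illustration.

```javascript
// Illustrative helpers; only fovea_deg and fovealRadius are names from the fix itself.
function pixelsPerDegree(config) {
  return config.fovealRadius / config.fovea_deg;
}

function eccentricityDeg(distancePx, config) {
  return distancePx / pixelsPerDegree(config);
}

function isInsideClearZone(distancePx, config) {
  return distancePx <= config.fovealRadius;
}

const before = { fovea_deg: 2.0, fovealRadius: 90 };
const after = { fovea_deg: 1.0, fovealRadius: 45 };

// Calibration is unchanged: 90 / 2 and 45 / 1 are both 45 px per degree.
console.log(pixelsPerDegree(before), pixelsPerDegree(after));

// Content 60px from fixation sits at 60 / 45 ≈ 1.33° in both configurations,
// but only the old configuration treats it as foveal.
console.log(eccentricityDeg(60, after));
console.log(isInsideClearZone(60, before), isInsideClearZone(60, after));
```

Since the pixel-to-degree mapping is the same before and after, only comparisons against the foveal radius itself change.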

Existing users’ stored radius is halved automatically on first launch.
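A one-time migration like this needs a guard so the radius is not halved again on the next launch. The sketch below shows one way to do that; the settings shape and the foveaCalibrationVersion marker are hypothetical and are not taken from Scrutinizer's source.

```javascript
// Hypothetical settings migration; field names other than fovealRadius are assumptions.
const CURRENT_CALIBRATION_VERSION = 2;

function migrateFovealRadius(settings) {
  // Already migrated (or created by a fixed release): leave untouched.
  if (settings.foveaCalibrationVersion >= CURRENT_CALIBRATION_VERSION) {
    return settings;
  }

  const migrated = { ...settings, foveaCalibrationVersion: CURRENT_CALIBRATION_VERSION };

  // Halve a stored radius; if none was stored, the new default applies elsewhere.
  if (typeof settings.fovealRadius === 'number') {
    migrated.fovealRadius = settings.fovealRadius / 2;
  }
  return migrated;
}

const stored = { fovealRadius: 120 };       // a user who had customized the radius
const once = migrateFovealRadius(stored);   // fovealRadius becomes 60
const twice = migrateFovealRadius(once);    // unchanged, the version marker blocks a second halving
```

Halving the stored value rather than resetting it keeps each user's chosen radius in proportion to the corrected default.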

Why 47 Validation Checks Didn’t Catch It

Scrutinizer has a multi-tier validation pipeline: 47 checks across 7 waves, comparing shader output against published psychophysical data. An adversarial audit classified every check as ordinal (rank order, monotonicity, correlation) or absolute (specific numeric value at a specific eccentricity). 51% are absolute. On paper, 26 checks should fail under a 2x eccentricity scaling.

They didn’t. Three failure modes compounded:

Self-referential checks. Wave 2 validates M-scaling cutoffs by comparing the shader’s output against predictions from a script that consumed the same wrong fovea_deg = 2.0. The check verified internal consistency, not correctness.

Tolerance absorption. Checks with ±30% tolerance absorb up to a 1.3x systematic error. Exponential decay curves are shallow at moderate eccentricities (3–6°), so a 2x error stayed within bounds.
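To see how a 2x error can hide inside a ±30% band, consider a toy decay curve f(e) = exp(-e / λ). The curve shape and the 12° decay constant are assumptions chosen for illustration, not Scrutinizer's actual model or parameters. Sampling the curve at half the intended eccentricity inflates the value by a factor of exp(e / 2λ), which stays under 1.3 as long as e is at most 2λ · ln(1.3).

```javascript
// Toy model for illustration; the exponential form and lambda are assumptions.
function decay(eccentricityDeg, lambda) {
  return Math.exp(-eccentricityDeg / lambda);
}

// Ratio of the value sampled at half the intended eccentricity to the correct value.
function scalingErrorRatio(eccentricityDeg, lambda) {
  return decay(eccentricityDeg / 2, lambda) / decay(eccentricityDeg, lambda);
}

// True when a check with the given relative tolerance would not notice the 2x scaling.
function isAbsorbed(eccentricityDeg, lambda, tolerance) {
  return Math.abs(scalingErrorRatio(eccentricityDeg, lambda) - 1) <= tolerance;
}

// Largest eccentricity at which the 2x scaling still fits inside the tolerance.
function maxBlindEccentricity(lambda, tolerance) {
  return 2 * lambda * Math.log(1 + tolerance);
}

const LAMBDA = 12; // degrees, hypothetical decay constant

console.log(isAbsorbed(3, LAMBDA, 0.3));  // true: error ratio is about 1.13
console.log(isAbsorbed(6, LAMBDA, 0.3));  // true: error ratio is about 1.28
console.log(isAbsorbed(12, LAMBDA, 0.3)); // false: error ratio is about 1.65
console.log(maxBlindEccentricity(LAMBDA, 0.3)); // roughly 6.3 degrees
```

Under these assumed numbers, every check placed in the 3–6° range passes despite the scaling error; only a tighter tolerance or a check at larger eccentricity would have flagged it.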

Calibration/validation leak. Using the same published dataset to both calibrate parameters and validate output makes the validation circular.

The ordinal checks were fine — they’re doing what they should. The failure was in absolute checks that looked rigorous but weren’t independent of the bug.

The Validation Adversary

We’re adding a formal adversarial audit to the validation workflow. For each wave, the adversary asks:

- Does the “expected value” come from the same codebase, or from independent published data?

- What’s the maximum systematic error each tolerance absorbs?

- What’s the simplest transformation (scaling, offset, parameter swap) that passes all tiers?

- Which eccentricity × domain combinations have no absolute anchor?
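One way to automate part of these questions is a mutation probe: deliberately apply a systematic transformation to the model and list the checks that still pass. The sketch below is an assumption about how such a probe could look, not the audit tooling itself. The check format, the toy decay model, and the stand-in "reference" curve are all hypothetical, and the reference numbers are not published data.

```javascript
// Hypothetical mutation probe; check shape and models are illustrative assumptions.
function withinTolerance(actual, expected, tolerance) {
  return Math.abs(actual - expected) <= tolerance * Math.abs(expected);
}

// Returns the names of checks that still pass when the model's eccentricity
// input is transformed by `mutate`. Those checks are blind to that error.
function findBlindChecks(checks, model, mutate) {
  const mutated = (e) => model(mutate(e));
  return checks
    .filter((c) =>
      withinTolerance(mutated(c.eccentricity), c.expectedFrom(mutated), c.tolerance))
    .map((c) => c.name);
}

const model = (e) => Math.exp(-e / 12);     // toy model under test
const reference = (e) => Math.exp(-e / 12); // stand-in for independent data

const checks = [
  // Expected value is derived from the model under test, so it moves with the bug.
  { name: 'self-referential', eccentricity: 4, tolerance: 0.05, expectedFrom: (m) => m(4) },
  // Independent anchor, but the tolerance is wide enough to absorb the error.
  { name: 'loose anchor', eccentricity: 4, tolerance: 0.30, expectedFrom: () => reference(4) },
  // Independent anchor with a tolerance tight enough to fail.
  { name: 'tight anchor', eccentricity: 4, tolerance: 0.10, expectedFrom: () => reference(4) },
];

// Probe with a 2x eccentricity scaling.
const blind = findBlindChecks(checks, model, (e) => e / 2);
console.log(blind);
```

With these toy numbers the probe reports the self-referential check and the loose anchor as blind, and only the tight, independently anchored check catches the scaling.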

A validation suite that agrees with itself is not the same as one that agrees with reality. Separate your calibration data from your validation data. And when a domain expert offers to review your demo, say yes — that’s the calibration check you can’t automate.

Literature: Rayner 1998 · Rayner 2009 · Blauch, Alvarez & Konkle 2026 · Rovamo & Virsu 1979 · Bowers, Gegenfurtner & Goettker 2025

Source: peripheral.frag · gaze-model.js · capture-reading-span.js