2026-03-21

Isotropic Cortical Sampling

v2.6.0 release notes →

In 2006, Matt Queen asked a question in Boxes & Arrows that stuck: icon designers work at the pixel level, but users encounter icons at the periphery of their attention, where spatial resolution has already collapsed. What would designers do differently if they could see their work the way the visual system actually processes it?

Scrutinizer is one answer to that question. It simulates peripheral vision in real time, degrading a live browser frame based on gaze position. The degradation isn’t uniform blur — it’s a pipeline modeled on the visual system: spatial frequency decomposition (12 bands of Difference-of-Gaussians filtering, each tuned to a different detail level), differential chromatic decay (color sensitivity drops with eccentricity, red-green channels faster than blue-yellow), and feature displacement that produces crowding without fog.

v2.6 derives the displacement geometry from the cortical magnification function — the mapping between how far something is from where you’re looking and how much cortical tissue is devoted to processing it. Where the pipeline’s noise frequencies and scramble cell sizes were previously fixed constants, they now scale with cortical sector extent as defined by Blauch, Alvarez & Konkle (2026).

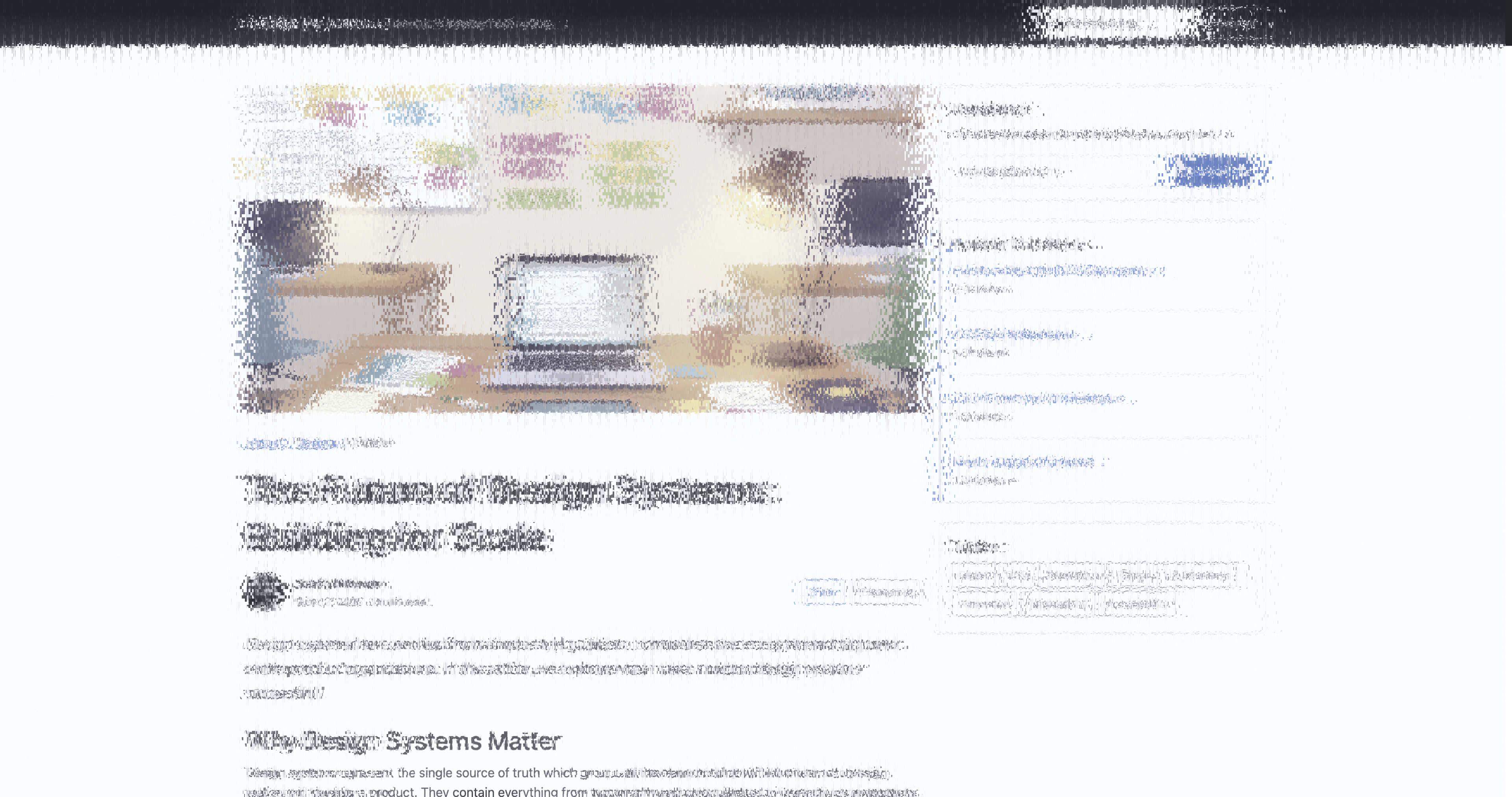

Original vs. through Scrutinizer.

The cortical grid

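Your brain dedicates most of its visual processing power to the center of gaze and progressively less to the periphery. The falloff is logarithmic, w = log(r + a), where r is eccentricity (distance from the gaze point), a is a small foveal constant that keeps the mapping finite at r = 0, and w is the corresponding position on the cortex. This curve sets the spatial scale at which peripheral features get pooled together.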

The GPU’s built-in texture sampling uses a rectangular grid — uniform square tiles across the whole image. That doesn’t match how resolution actually falls off. The isotropic cortical grid grows cell size with eccentricity and adjusts the number of angular slices at each ring to keep cells roughly square, matching how neurons actually tile the visual field. Resolution degrades equally in all directions, not faster in one axis than another.
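As a rough illustration of how such a grid can be laid out, the sketch below spaces ring edges evenly in w = log(r + a) and picks the slice count per ring so that arc length roughly matches radial extent. The function name and the values of `r_max`, `a`, and `n_rings` are illustrative assumptions, not Scrutinizer's code or the construction in Blauch, Alvarez & Konkle.

```python
import math

def isotropic_rings(r_max, a, n_rings):
    """Return (r_inner, r_outer, n_slices) per ring.

    Ring edges are evenly spaced in w = log(r + a), so rings get wider
    with eccentricity. All parameters are hypothetical illustration values.
    """
    w0, w1 = math.log(a), math.log(r_max + a)
    dw = (w1 - w0) / n_rings
    rings = []
    for k in range(n_rings):
        r_in = math.exp(w0 + k * dw) - a
        r_out = math.exp(w0 + (k + 1) * dw) - a
        radial = r_out - r_in
        r_mid = 0.5 * (r_in + r_out)
        # Choose the slice count so arc length at mid-radius ~= radial extent,
        # which keeps each cell roughly square.
        n_slices = max(1, round(2 * math.pi * r_mid / radial))
        rings.append((r_in, r_out, n_slices))
    return rings

for r_in, r_out, n in isotropic_rings(r_max=30.0, a=1.0, n_rings=8):
    arc = 2 * math.pi * 0.5 * (r_in + r_out) / n
    print(f"ring {r_in:6.2f}..{r_out:6.2f}  slices={n:2d}  arc/radial={arc / (r_out - r_in):.2f}")
```

Because the slice count is rounded to an integer, cells are only approximately square; the aspect ratio stays close to 1 rather than hitting it exactly.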

Rectangular MIP grid (left) vs. isotropic cortical grid (right). View on CodePen

What the grid drives

Scrutinizer’s MIP level selection already used log(1 + r/a), which is the same logarithmic curve up to a constant offset, since log(r + a) = log a + log(1 + r/a). What v2.6 adds is a second use of the same geometry: parameterizing displacement. Two mechanisms degrade peripheral content: a noise warp (“Bender”) that shifts features and a discrete scramble (“Cutter”) that breaks within-feature coherence. Both now scale with the local cortical sector extent:

Fixed parameters vs. sector-parameterized.

Same page, same gaze. These look similar, and that’s the point. 38% of pixels differ, but the visual character is the same: text is destroyed, layout survives. The change is in the spatial profile (4-8% lower peripheral variance, a smoother degradation gradient), not in any dramatic visual difference. This is a derivation upgrade, not a mechanism upgrade.

Two mechanisms work together to break letter identity in the periphery. Neither alone is enough — smooth warping preserves structure, and cell scrambling alone leaves fragments recognizable. Combined, they destroy identity while preserving texture:

The displacement pipeline in isolation and in sequence. The Bender shifts features smoothly; the Cutter breaks them into displaced cells. Neither alone destroys letter identity — together they do, while preserving texture and layout.

- Bender (noise warp) — we scale frequency inversely with sector extent. Near the fovea (~7px sectors), the warp is fine-grained. At 15° (~52px sectors), warp wavelength grows to feature scale. Features shift position smoothly, like looking through textured glass.

- Cutter (discrete scramble) — we divide the image into small cells and displace each by a random offset. Cell size tracks sector extent (floored at 8px, capped at 16px). Throw distance — how far each cell moves from its original position — is bounded by one sector width, with 2:1 radial bias (crowding is stronger along the fovea-to-periphery axis than tangentially; Toet & Levi 1992). Features break apart at sub-letter scale.

In practice, the delta is quiet — mostly a reduction in implausible long-range scatters — but it aligns the MIP chain and displacement pipeline under a single cortical geometry.
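The parameter rules for the Bender and Cutter can be written down compactly. This is a minimal sketch under stated assumptions: the function names are hypothetical, the Bender is assumed to use one warp cycle per sector, and the 2:1 radial bias is assumed to mean the tangential throw bound is half the radial one. Only the 8px floor, 16px cap, and one-sector throw bound come from the text above.

```python
def bender_frequency(sector_px):
    """Warp frequency in cycles per pixel, inversely proportional to sector extent.

    Assumption: one cycle per sector (proportionality constant of 1).
    """
    return 1.0 / sector_px

def cutter_cell_px(sector_px, floor_px=8.0, cap_px=16.0):
    """Scramble cell size tracks sector extent, floored at 8px and capped at 16px."""
    return min(max(sector_px, floor_px), cap_px)

def cutter_throw_bounds_px(sector_px, radial_bias=2.0):
    """Return (max radial throw, max tangential throw) in pixels.

    Radial throw is bounded by one sector width; the tangential bound is
    assumed to be that width divided by the radial bias.
    """
    return sector_px, sector_px / radial_bias

for sector in (7.0, 12.0, 52.0):  # ~7px near the fovea, ~52px at 15 degrees
    print(sector, bender_frequency(sector), cutter_cell_px(sector), cutter_throw_bounds_px(sector))
```

With these rules a 7px foveal sector gets the 8px minimum cell, while a 52px sector at 15° is held to the 16px cap but may throw cells up to 52px radially.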

Working with the hardware

When a texture covers fewer screen pixels (because it’s far away, at a steep angle, or warped), sampling its full detail would create shimmer and noise. The GPU avoids this by choosing a pre-blurred version (MIP level) that matches how densely you’re actually sampling. It measures this with dFdx (and its vertical counterpart, dFdy): the rate of change of the texture coordinate between neighboring screen pixels. Fast-changing coordinates mean you’re skipping texels, so the hardware picks a blurrier level. Slow-changing coordinates mean you’re sampling densely, so it picks a sharp one.
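To make the level selection concrete, here is a CPU-side sketch of the textbook isotropic level-of-detail formula: measure how many texels one screen pixel spans along each screen axis, take the larger, and use its base-2 logarithm. The function name and arguments are illustrative; real hardware is allowed to approximate this and behaves differently with anisotropic filtering enabled.

```python
import math

def mip_level(duv_dx, duv_dy, tex_size, max_level):
    """Approximate MIP level from UV derivatives (the values dFdx/dFdy would give).

    duv_dx, duv_dy: (du, dv) change in UV per one-pixel step along screen x and y.
    tex_size: (width, height) of the base texture in texels.
    """
    w, h = tex_size
    # Footprint of one screen pixel, measured in texels, along each screen axis.
    fx = math.hypot(duv_dx[0] * w, duv_dx[1] * h)
    fy = math.hypot(duv_dy[0] * w, duv_dy[1] * h)
    rho = max(fx, fy)
    if rho <= 1.0:
        return 0.0  # sampling at or above texel density: sharpest level
    return min(math.log2(rho), float(max_level))

size = (1024, 1024)
print(mip_level((1 / 1024, 0.0), (0.0, 1 / 1024), size, 10))  # one texel per pixel
print(mip_level((4 / 1024, 0.0), (0.0, 4 / 1024), size, 10))  # four texels per pixel
```

One texel per pixel selects level 0, and every doubling of the footprint moves one level up the chain.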

We’re not computing blur — we’re lying to the GPU about how fast the texture is moving.

When V1 displacement warps the sampling UV, the original derivatives become wrong: they describe the undistorted coordinate field, not the warped one. Passing dFdx(v1.distortedUV), the derivative of the warped coordinate, makes the hardware think the texture is changing faster than it actually is in the periphery, which triggers higher (blurrier) MIP levels automatically. The result is eccentricity-scaled blur, computed by the texture sampling unit, not by us.
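The effect can be reproduced on the CPU with a one-dimensional toy. The sketch below assumes a hypothetical sinusoidal warp (not Scrutinizer's actual noise field) and a texture mapped 1:1 to the screen, then takes a finite difference between neighboring pixels, which is what dFdx does, and converts it to a MIP level.

```python
import math

def warped_u(x_px, width_px, amp_px, wavelength_px):
    """Hypothetical 1-D warp: identity plus a sinusoidal offset, returned in UV units."""
    offset = amp_px * math.sin(2 * math.pi * x_px / wavelength_px)
    return (x_px + offset) / width_px

def lod_from_warp(x_px, width_px, amp_px, wavelength_px):
    """MIP level implied by the warped coordinate's pixel-to-pixel difference."""
    du = warped_u(x_px + 1, width_px, amp_px, wavelength_px) - warped_u(
        x_px, width_px, amp_px, wavelength_px
    )
    texels_per_pixel = abs(du) * width_px
    if texels_per_pixel <= 1.0:
        return 0.0
    return math.log2(texels_per_pixel)

# No warp (as at the fovea) versus a strong warp (as in the periphery).
print(lod_from_warp(0, 1024, amp_px=0.0, wavelength_px=16.0))
print(lod_from_warp(0, 1024, amp_px=8.0, wavelength_px=16.0))
```

With zero amplitude the derivative is exactly one texel per pixel and the level stays at 0. With the warp switched on, the same pixel step covers several texels, so the implied level rises without any explicit blur pass.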

The tiles are still square — that’s the hardware. But the level selection now tracks the cortical warp. Scrutinizer’s 12-band DoG decomposition turns the MIP chain’s blunt power-of-2 steps into graded frequency loss: serifs drop while panel layout survives. (Details in the MIP chain tech brief.)
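The general idea behind a band decomposition can be sketched in one dimension: blur the signal at successively larger scales, store the difference between adjacent blur levels as a band, and rebuild the signal as a weighted sum of bands plus the coarsest residual. This sketch uses box blurs with doubling radii and hypothetical function names; it is not the shader's 12-band implementation.

```python
def box_blur(signal, radius):
    """Box blur with edge clamping (window shrinks at the borders)."""
    n = len(signal)
    out = []
    for i in range(n):
        lo, hi = max(0, i - radius), min(n, i + radius + 1)
        out.append(sum(signal[lo:hi]) / (hi - lo))
    return out

def decompose(signal, n_bands):
    """Split a signal into difference bands plus a low-pass residual."""
    levels = [list(signal)]
    for k in range(n_bands):
        levels.append(box_blur(levels[-1], 2 ** k))
    bands = [
        [fine - coarse for fine, coarse in zip(levels[k], levels[k + 1])]
        for k in range(n_bands)
    ]
    return bands, levels[-1]

def reconstruct(bands, residual, weights):
    """Weighted sum of bands on top of the residual; weights of 1 restore the input."""
    out = list(residual)
    for band, weight in zip(bands, weights):
        out = [o + weight * b for o, b in zip(out, band)]
    return out

signal = [0.0, 1.0, 0.0, 1.0, 0.0, 1.0, 0.0, 1.0, 4.0, 4.0, 4.0, 4.0, 4.0, 4.0, 4.0, 4.0]
bands, residual = decompose(signal, n_bands=3)
print(reconstruct(bands, residual, [1.0, 1.0, 1.0]))  # full detail
print(reconstruct(bands, residual, [0.0, 0.5, 1.0]))  # fine band dropped, mid band attenuated
```

Fractional weights are what make the loss graded: instead of jumping a whole MIP level, each band can fade out independently as eccentricity grows.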

The real-time constraint forces specific tradeoffs: displacement instead of summary-statistic pooling, hardware MIP instead of continuous receptive fields, box-filtered frequency bands instead of Gaussian convolution. The spec documents these approximations and the implementation journal records the 8 rendering approaches attempted.

The next step: pooling, not displacement

The isotropic grid gives the correct pooling regions. What remains is implementing the correct pooling computation within them.

Displacement: pixels move but keep their identity. A displaced letter is still a letter, just in the wrong place.

Pooling: features merge, and individual identities are replaced by summary statistics. A pooled letter becomes texture. (Rosenholtz 2016)
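The distinction is easy to see on a single cell of pixel values. In this toy sketch, displacement is modeled as a shuffle and pooling as replacement by the mean. The mean is only the simplest possible summary statistic, chosen here for illustration; pooling models in the literature use much richer texture statistics.

```python
import random

def displace(cell, rng):
    """Move values around inside the cell; every original value survives."""
    shuffled = list(cell)
    rng.shuffle(shuffled)
    return shuffled

def pool(cell):
    """Replace every value with a summary statistic (here, just the mean)."""
    mean = sum(cell) / len(cell)
    return [mean] * len(cell)

cell = [0, 255, 0, 255, 128, 64, 32, 16]
rng = random.Random(0)

print(displace(cell, rng))  # same values, different positions
print(pool(cell))           # individual values are gone
```

After displacement the original values can still be recovered by sorting; after pooling they cannot, which is the information loss displacement alone does not produce.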

The gap is architectural. Pooling-based synthesis needs a WebGPU compute pipeline (pyramid decomposition, cross-scale statistics, multi-band texture matching) that fits within a 3–4 ms real-time budget. We’re building that now, with the isotropic sectors providing the pooling geometry and Brown et al.’s metamer implementation as a reference for calibrating information loss.

Literature: Blauch, Alvarez & Konkle 2026 · Rovamo & Virsu 1979 · Schwartz 1980 · Rosenholtz 2016 · Toet & Levi 1992 · Schyns & Oliva 1994 · Loftus & Harley 2005

Demos: Grid comparison (CodePen) · Cortical manifold

Update (March 2026): The sector pooling geometry described above is now implemented in the WebGPU compute pipeline, with eccentricity-scaled sectors validated against Blauch’s reference. Brown metamer comparison in progress.