2026-03-08

Measuring the Pipeline

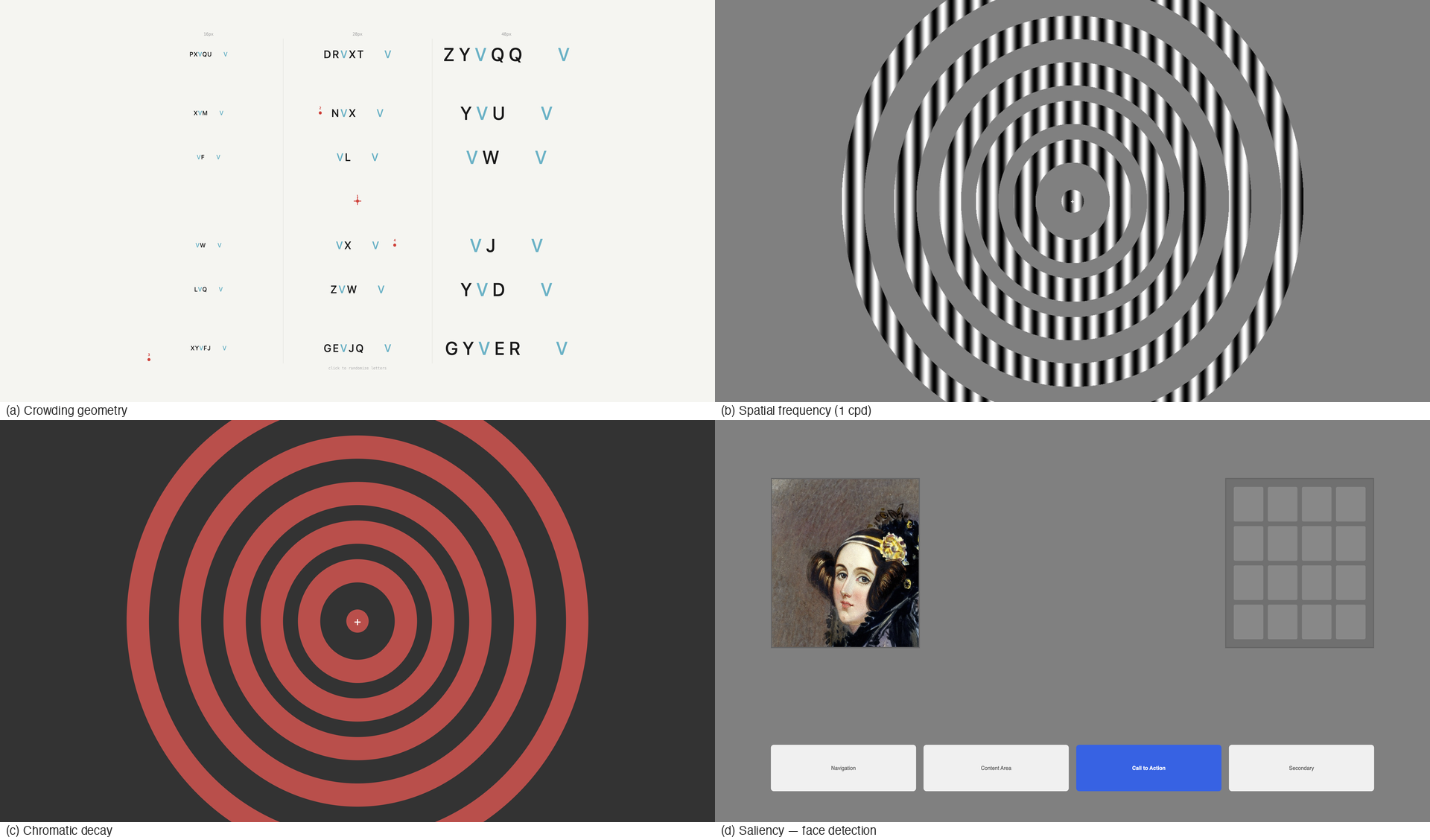

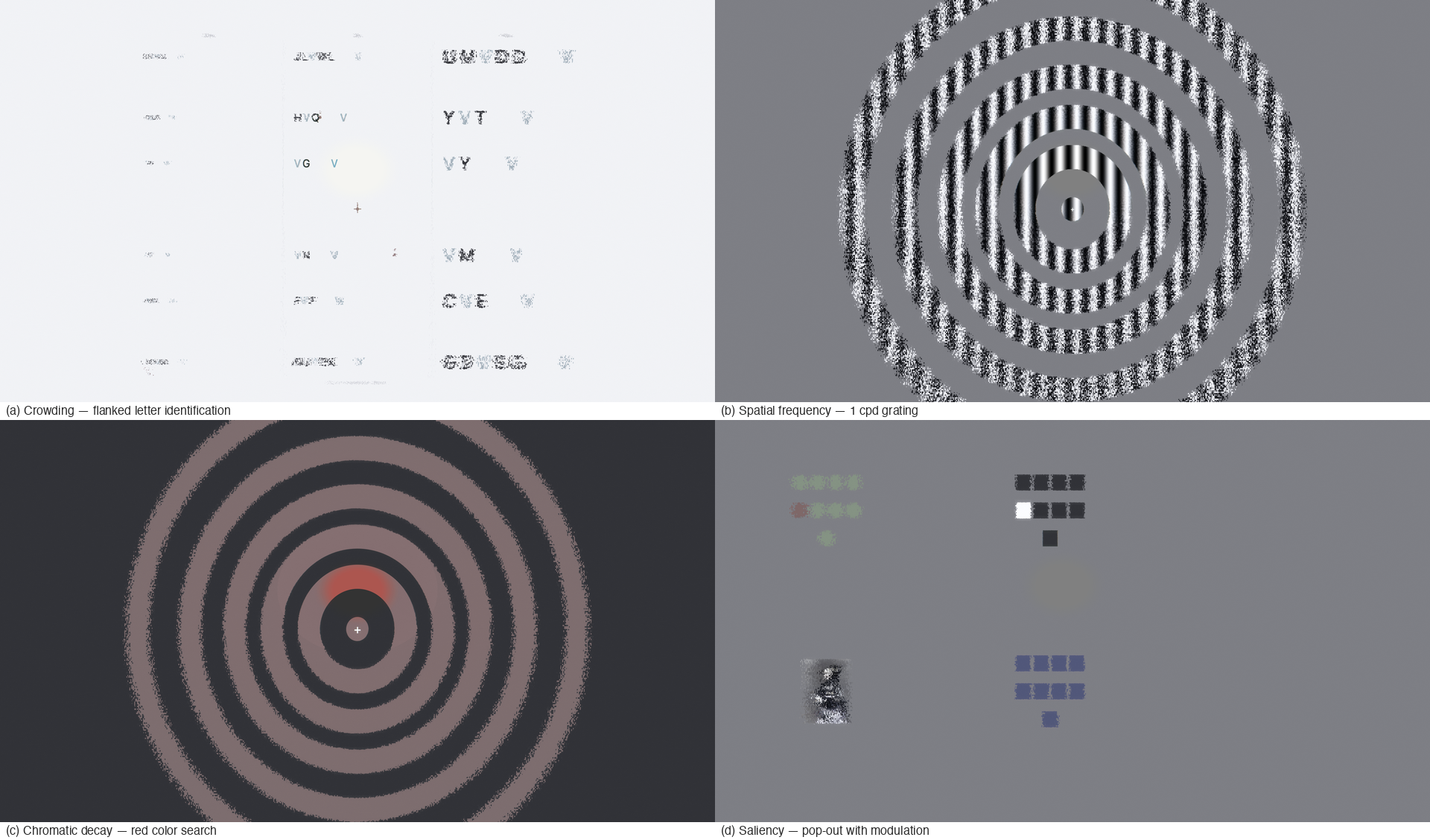

v2.1.0 release notes →

v2.0 made the pipeline visible. v2.1 asks whether it’s correct. We rebuilt five classic human vision experiments as automated reference pages, ran the shader against each one, and compared pixel measurements to the published data. The whole battery (stimulus pages, capture scripts, analysis, golden captures) ran in a single day. Three bugs surfaced that prior testing had missed.

- Chromatic decay — Mullen 2002, Hansen 2009, Bowers 2025

- Spatial frequency — Rovamo & Virsu 1979

- Crowding geometry — Bouma 1970, Toet & Levi 1992

- Saliency protection — Itti & Koch 2001, Hershler 2005

- Mixed-density search — Halverson & Hornof 2011

Also in this release: 8 half-octave DoG bands replace the old 4-band decomposition. Smoother blur gradient, twice as many frequency transitions, same API.

Five waves of validation

Each wave targets a different pipeline stage. The pattern is the same throughout: render a known stimulus through the shader, measure output pixels, compare against published human data.

| Wave | Domain | Published basis | Key finding |
|---|---|---|---|
| 1 | Chromatic decay | Mullen 2002, Hansen 2009, Bowers 2025 | Green tracks the RG curve, not BY; hue-based models get this wrong |
| 2 | Spatial frequency | Rovamo & Virsu 1979 | DoG produces step functions at MIP boundaries vs smooth CSF |
| 3 | Crowding geometry | Bouma 1970, Toet & Levi 1992 | R:T bug found and fixed; density gate validated at 3.3:1 ratio |
| 4 | Saliency protection | Itti & Koch 2001, Hershler 2005 | Face saliency 4.79× control; protection ratio 0.283 |
| 5 | Mixed-density search | Halverson & Hornof 2011 | Density gate validates at macro level; word-level granularity gap found |

The full validation infrastructure ships with the repository: 5 capture scripts, 5 analysis scripts, 15 reference pages, 25+ golden captures, and machine-readable JSON of published data spanning 46 years of vision science (Rovamo 1979, Mullen 2002, Hansen 2009, Bowers 2025). Every claim in the arXiv paper can be reproduced by running the scripts.

What the numbers say

Each wave is graded at three tiers: Tier 1, must-pass (correct qualitative behavior); Tier 2, should-pass (quantitative agreement); and Tier 3, stretch (publication-quality correlation with human data).
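The wave pattern (render a known stimulus, measure output pixels, compare against published data) rolls individual checks up into per-tier counts such as "5/5 Tier 1". A minimal sketch of that bookkeeping follows. The `Check` class, `grade`, `format_summary`, and every threshold are hypothetical illustrations, not the repository's analysis scripts; only the ring-5 retention values come from the Wave 1 results reported in the next paragraph.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

Measurements = Dict[str, float]

@dataclass
class Check:
    name: str
    tier: int  # 1 = must-pass, 2 = should-pass, 3 = stretch
    passed: Callable[[Measurements], bool]

def grade(checks: List[Check], measured: Measurements) -> Dict[int, Tuple[int, int]]:
    """Roll individual checks up into (passed, total) counts per tier."""
    summary: Dict[int, Tuple[int, int]] = {}
    for check in checks:
        ok, total = summary.get(check.tier, (0, 0))
        summary[check.tier] = (ok + int(check.passed(measured)), total + 1)
    return summary

def format_summary(summary: Dict[int, Tuple[int, int]]) -> str:
    """Render counts in the '5/5 Tier 1' style used in the results."""
    return ", ".join(f"{ok}/{total} Tier {tier}" for tier, (ok, total) in sorted(summary.items()))

# Hypothetical Wave 1 checks; thresholds are illustrative, not the project's.
wave1_checks = [
    Check("blue retains more than red", 1, lambda m: m["blue"] > m["red"]),
    Check("green closer to red than to blue", 1,
          lambda m: abs(m["green"] - m["red"]) < abs(m["green"] - m["blue"])),
    Check("blue/red ratio within [1.5, 2.0]", 2, lambda m: 1.5 <= m["blue"] / m["red"] <= 2.0),
]

ring5 = {"red": 0.431, "green": 0.519, "blue": 0.735}  # retention fractions from Wave 1
print(format_summary(grade(wave1_checks, ring5)))  # 2/2 Tier 1, 1/1 Tier 2
```

Keeping each check as a named predicate means a failing tier can report which qualitative or quantitative expectation broke, not just a count.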

Wave 1 (chromatic decay): 5/5 Tier 1, 3/3 Tier 3. Blue retention at ring 5 is 1.70× red, matching the Mullen & Kingdom (2002) RG/BY asymmetry. Model retention correlates $r = 1.000$ with Hansen et al.’s (2009) color naming data. The green result is the interesting one: 51.9% retention, closer to red (43.1%) than to blue (73.5%). In Oklab, green’s chroma splits ~57% RG (the a axis) / ~43% BY (the b axis). As the RG channel collapses peripherally, green shifts toward yellow-green. Hue-based models miss this; opponent-channel models get it for free.
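The ~57/43 split for green can be checked directly. The sketch converts sRGB to Oklab with Björn Ottosson's published matrices and then, as an assumption about how the split was computed, reports the share of |a| and |b| in |a| + |b|. The function names are illustrative and are not taken from the shader.

```python
import math
from typing import Tuple

def srgb_to_linear(c: float) -> float:
    """sRGB transfer function (IEC 61966-2-1) for one channel in [0, 1]."""
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4

def srgb_to_oklab(r: float, g: float, b: float) -> Tuple[float, float, float]:
    """Convert gamma-encoded sRGB in [0, 1] to Oklab (L, a, b)."""
    r, g, b = srgb_to_linear(r), srgb_to_linear(g), srgb_to_linear(b)
    # Linear sRGB -> approximate cone responses (LMS)
    l = 0.4122214708 * r + 0.5363325363 * g + 0.0514459929 * b
    m = 0.2119034982 * r + 0.6806995451 * g + 0.1073969566 * b
    s = 0.0883024619 * r + 0.2817188376 * g + 0.6299787005 * b
    # Cube-root compression; sign-preserving so negative input stays real
    l_, m_, s_ = (math.copysign(abs(x) ** (1 / 3), x) for x in (l, m, s))
    return (
        0.2104542553 * l_ + 0.7936177850 * m_ - 0.0040720468 * s_,
        1.9779984951 * l_ - 2.4285922050 * m_ + 0.4505937099 * s_,  # a: red-green
        0.0259040371 * l_ + 0.7827717662 * m_ - 0.8086757660 * s_,  # b: blue-yellow
    )

def opponent_split(r: float, g: float, b: float) -> Tuple[float, float]:
    """Fraction of chroma magnitude on the a (RG) axis vs the b (BY) axis."""
    _, a, bb = srgb_to_oklab(r, g, b)
    total = abs(a) + abs(bb)
    if total == 0.0:
        return (0.0, 0.0)  # achromatic: no opponent signal to split
    return (abs(a) / total, abs(bb) / total)

rg, by = opponent_split(0.0, 1.0, 0.0)  # pure sRGB green
print(f"green: {rg:.0%} RG / {by:.0%} BY")  # green: 57% RG / 43% BY
```

Because more than half of green's chroma rides on the a axis, a model that attenuates the RG channel faster than BY will pull green's retention toward red's, which is the pattern Wave 1 measured.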

Wave 2 (spatial frequency): 11/11 Tier 1, 5/5 Tier 2, 0/1 Tier 3. Frequency ordering is correct everywhere, M-scaling cutoffs match exactly, and the residual band (layout blocks) survives at 100% across all rings. But Tier 3 fails: per-band Spearman correlations with Rovamo & Virsu (1979) range from $r = -1.0$ to $r = 0.6$, because each DoG band transitions from 100% to 0% in a single step. The composite metric is $r = 0.600$. The 8-band upgrade narrows each step but doesn’t eliminate the underlying discretization.

A matched Gaussian blur comparison confirmed the root cause: DoG and Gaussian produce near-identical contrast retention slopes on achromatic gratings (ring 4: both $-0.4675$; ring 5: $-0.3592$ vs $-0.3601$). Both pipelines sample from the same MIP chain, so the band decomposition adds no frequency selectivity beyond what the MIP pyramid already provides. The differentiation is architectural: saliency gating, chromatic channel separation, and density-gated crowding are pipeline stages that Gaussian blur lacks entirely, and the saliency comparison shows 5–10× better content preservation than Gaussian blur.
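Why per-band correlations break down on step functions is easy to see with a toy example. The sketch computes Spearman's rank correlation (the Pearson correlation of tie-averaged ranks) between a smooth retention curve over six rings and two band profiles: one that drops from 100% to 0% in a single step, and one that never reaches its cutoff. All three curves are made up for illustration and do not reproduce the Rovamo & Virsu data.

```python
import math
from typing import List, Optional

def average_ranks(xs: List[float]) -> List[float]:
    """1-based ranks; tied values share the mean of the ranks they span."""
    order = sorted(range(len(xs)), key=lambda i: xs[i])
    ranks = [0.0] * len(xs)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and xs[order[j + 1]] == xs[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks

def spearman(xs: List[float], ys: List[float]) -> Optional[float]:
    """Spearman's rho; None when either series is constant (no ranking exists)."""
    rx, ry = average_ranks(xs), average_ranks(ys)
    n = len(xs)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    vx = sum((a - mx) ** 2 for a in rx)
    vy = sum((b - my) ** 2 for b in ry)
    if vx == 0 or vy == 0:
        return None
    return cov / math.sqrt(vx * vy)

smooth = [1.0, 0.9, 0.7, 0.45, 0.2, 0.05]  # hypothetical smooth falloff across six rings
step = [1.0, 1.0, 1.0, 0.0, 0.0, 0.0]      # band cut off between rings 2 and 3
flat = [1.0] * 6                           # band never reaches its cutoff
print(spearman(smooth, step), spearman(smooth, flat))
```

The step profile scores about 0.88 even though both curves fall monotonically, and its value depends only on which ring the step lands in; the flat profile has no rank variance, so its correlation is undefined. Scoring all bands together, as a composite does, sidesteps grading each step in isolation.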

Three bugs found by measurement

- Polar sector R:T ratio — spoke count computed from biased ring width, producing ~1:1 instead of the 2:1 Toet & Levi measured. Fixed.

- V1 far-peripheral growth — displacement plateaued beyond the parafovea. Growth factor tuned from 0.5 to 1.5.

- Rovamo composite metric — per-band correlations were meaningless for step functions. Replaced with frequency-weighted composite.
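The R:T fix comes down to choosing a spoke count per ring. A minimal sketch, assuming rings are annuli and the ratio is measured at each ring's center radius: a sector's tangential width is 2πr/N, so a 2:1 radial:tangential cell needs N ≈ 4πr/w for radial width w. The ring edges and function names are hypothetical, and the renderer's actual layout may differ.

```python
import math
from typing import List, Tuple

TARGET_RT = 2.0  # radial : tangential extent reported by Toet & Levi (1992)

def spokes_for_ring(r_inner: float, r_outer: float, target_rt: float = TARGET_RT) -> int:
    """Angular sector count so each cell is ~target_rt times longer radially."""
    radial = r_outer - r_inner
    r_center = 0.5 * (r_inner + r_outer)
    tangential_goal = radial / target_rt
    return max(1, round(2 * math.pi * r_center / tangential_goal))

def achieved_rt(r_inner: float, r_outer: float, spokes: int) -> float:
    """Radial/tangential ratio actually produced at the ring's center radius."""
    r_center = 0.5 * (r_inner + r_outer)
    return (r_outer - r_inner) / (2 * math.pi * r_center / spokes)

# Hypothetical octave-spaced ring edges, in degrees of eccentricity
edges = [1.0, 2.0, 4.0, 8.0, 16.0]
rings: List[Tuple[float, float]] = list(zip(edges, edges[1:]))
for r0, r1 in rings:
    n = spokes_for_ring(r0, r1)
    print(f"{r0:>4}-{r1:<4} deg: {n} spokes, R:T = {achieved_rt(r0, r1, n):.2f}")
```

Measuring the achieved ratio after integer rounding, rather than trusting the formula, is what turns a silent ~1:1 regression into a number a test can catch.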

The Halverson gap

Halverson & Hornof (2011) found peripheral encoding accuracy drops from 90% to 50% when word spacing falls below 0.15°. Scrutinizer’s density gate makes the same structural prediction — distortion strength scales with density via sigmoid — but the structure map reads DOM blocks, not words. A sparse group (5 words, 0.66° spacing) and a dense group (10 words, 0.33°) produce nearly identical density values. SSIM difference: 0.3% at 90px foveal radius, 0.1% at 60px. The gate validates at the macro level (sidebar vs hero image) but not the micro level (word spacing within a block). Congestion scoring (Rosenholtz Feature Congestion) provides a complementary signal that operates on pixel-level texture statistics rather than DOM structure, partially bridging this granularity gap.
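Part of the gap is simple geometry. Treating the quoted spacing as center-to-center, twice the words at half the spacing cover almost the same extent (4 × 0.66° = 2.64° vs 9 × 0.33° = 2.97°), so any measure built from a group's footprint barely moves. The sketch contrasts a footprint-based proxy with a words-per-degree proxy. Both proxies and the 4° window are hypothetical illustrations, not Scrutinizer's structure map.

```python
def group_span(n_words: int, spacing_deg: float) -> float:
    """Extent (deg) from first to last word center at a fixed center-to-center spacing."""
    return (n_words - 1) * spacing_deg

def footprint_density(span_deg: float, window_deg: float) -> float:
    """Block-level proxy: fraction of an analysis window covered by the group's box."""
    return min(1.0, span_deg / window_deg)

def words_per_degree(n_words: int, span_deg: float) -> float:
    """Word-level proxy: how tightly packed the words are inside the group."""
    return n_words / span_deg

WINDOW_DEG = 4.0  # hypothetical analysis window

groups = {"sparse": (5, 0.66), "dense": (10, 0.33)}
results = {}
for label, (n, spacing) in groups.items():
    span = group_span(n, spacing)
    results[label] = (footprint_density(span, WINDOW_DEG), words_per_degree(n, span))
    print(f"{label}: span {span:.2f} deg, footprint {results[label][0]:.2f}, "
          f"{results[label][1]:.2f} words/deg")
```

The footprint proxy changes by about 12% between the two groups while the words-per-degree proxy changes by about 1.8×, which is consistent with a gate that separates sidebar from hero image but not one word spacing from another.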

Known limits

- Stepped blur, not smooth. 8 bands are better than 4, but they still produce discrete steps where human vision has a continuous falloff.

- Crowding strength, not spacing. The density gate scales distortion intensity but not the critical spacing at which crowding engages. The shader doesn’t know what’s adjacent to a given pixel.

- No stimulus-specific crowding. Same-orientation flankers should crowd harder than orthogonal ones (Pelli & Tillman 2008). The renderer has no concept of target-flanker similarity.
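For contrast with the second and third limitations, here is what a spacing-aware test would need. The sketch assumes Bouma's proportionality constant of 0.5 and Toet & Levi's 2:1 radial elongation, and models the crowding zone as an ellipse centered on the target with its long axis pointing toward fixation. The ellipse shape, constants, and function name are assumptions for illustration, not part of the renderer.

```python
import math
from typing import Tuple

BOUMA = 0.5                 # critical spacing ~ 0.5 x eccentricity (Bouma 1970)
RADIAL_TO_TANGENTIAL = 2.0  # zone elongation (Toet & Levi 1992)

Point = Tuple[float, float]

def in_crowding_zone(target: Point, flanker: Point, fixation: Point = (0.0, 0.0)) -> bool:
    """True if the flanker falls inside the target's elliptical crowding zone.

    Positions are (x, y) in degrees of visual angle.
    """
    tx, ty = target[0] - fixation[0], target[1] - fixation[1]
    ecc = math.hypot(tx, ty)
    if ecc == 0.0:
        return False  # the linear rule gives a zero-size zone at fixation
    ux, uy = tx / ecc, ty / ecc             # unit vector pointing away from fixation
    dx, dy = flanker[0] - target[0], flanker[1] - target[1]
    d_rad = dx * ux + dy * uy               # offset along the radial axis
    d_tan = -dx * uy + dy * ux              # offset along the tangential axis
    a_rad = BOUMA * ecc
    a_tan = a_rad / RADIAL_TO_TANGENTIAL
    return (d_rad / a_rad) ** 2 + (d_tan / a_tan) ** 2 < 1.0

print(in_crowding_zone((10.0, 0.0), (13.5, 0.0)))  # radial flanker, 3.5 deg away
print(in_crowding_zone((10.0, 0.0), (10.0, 3.5)))  # tangential flanker, 3.5 deg away
```

The same 3.5° offset crowds a target at 10° eccentricity when it is radial but not when it is tangential. Answering that requires the flanker's position, which is exactly the adjacency information a per-pixel shader pass lacks.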

Update: v2.2’s oriented DoG bands partially address the third limitation. For cardinal-aligned edges (horizontal, vertical), M-scaling cutoffs now extend ~50% further into the periphery than for oblique edges, and radial edges fade faster than tangential ones. This isn’t full stimulus-specific crowding (flanker similarity is still not computed), but orientation now modulates degradation strength.
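One way to express "cardinal edges keep their bands ~50% further out" is a multiplier on each band's cutoff eccentricity. The cos(4θ) interpolation, the function names, and the 10° base cutoff are assumptions for illustration; the v2.2 shader may weight orientations differently, and the radial/tangential asymmetry is not modeled here.

```python
import math

def orientation_gain(theta_rad: float, cardinal_gain: float = 1.5) -> float:
    """Cutoff multiplier: cardinal_gain at 0/90/180/270 deg, 1.0 at 45-deg obliques.

    cos(4 * theta) is +1 at cardinal angles and -1 at obliques, so this
    interpolates smoothly between the two extremes.
    """
    mid = (cardinal_gain + 1.0) / 2
    half_range = (cardinal_gain - 1.0) / 2
    return mid + half_range * math.cos(4 * theta_rad)

def oriented_cutoff(base_cutoff_deg: float, theta_rad: float) -> float:
    """Eccentricity at which a band drops out for an edge at orientation theta."""
    return base_cutoff_deg * orientation_gain(theta_rad)

for deg in (0.0, 22.5, 45.0, 90.0):
    print(deg, round(oriented_cutoff(10.0, math.radians(deg)), 2))
```

A smooth angular weighting avoids introducing a new hard step at some arbitrary orientation threshold, which would reproduce the discretization problem Wave 2 found in frequency.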

8 half-octave DoG bands

The previous version decomposed the image into 4 frequency bands — fine detail, medium detail, coarse structure, layout. The blur transition from sharp to pooled had 4 steps, which meant the parafoveal region (where text goes from readable to unreadable) had only 1–2 active bands at any point. Coarse.

v2.1 doubles the resolution to 8 bands at half-octave spacing. The blur gradient now has twice as many steps, with the biggest improvement in the critical parafoveal zone. The old 4 bands are still in there (bands 1, 3, 5, 7 below); the new bands interleave between them.

| Band | Freq (cpd) | Content | New? |
|---|---|---|---|
| 0 | 5.66 | Serifs, fine detail | New |
| 1 | 4.0 | Thin strokes | = old band 0 |
| 2 | 2.83 | Letter bodies | New |
| 3 | 2.0 | Small icons | = old band 1 |
| 4 | 1.41 | Words, UI labels | New |
| 5 | 1.0 | Word groups | = old band 2 |
| 6 | 0.71 | Buttons, panels | New |
| 7 | 0.5 | Layout blocks | = old band 3 |
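The frequencies in the table follow a half-octave ladder: each band sits a factor of √2 below the previous one, which fits f_k = 2^((5 − k)/2) cpd. This closed form is inferred from the table, not taken from the source code; the sketch regenerates the column and checks that the odd-numbered bands coincide with the old octave bands.

```python
def band_frequency(k: int) -> float:
    """Peak frequency in cycles per degree for half-octave band k (0 = finest)."""
    return 2 ** ((5 - k) / 2)

new_bands = [band_frequency(k) for k in range(8)]
old_bands = [2.0 ** (2 - j) for j in range(4)]  # previous octave bands: 4, 2, 1, 0.5 cpd

for k, f in enumerate(new_bands):
    print(f"band {k}: {f:.2f} cpd")

# Each odd-numbered new band lands exactly on an old octave band (band 2j + 1 = old band j).
assert all(abs(new_bands[2 * j + 1] - old_bands[j]) < 1e-12 for j in range(4))
```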

Performance cost is negligible — under 1ms at 1080p. See the MIP chain explainer for how the band decomposition maps to hardware texture levels.
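The band structure can be illustrated on a 1D signal. The sketch is a CPU stand-in, not the shader: it builds Gaussian levels whose sigma grows by √2 per level (two levels per octave, roughly how half-octave bands sit between MIP levels), takes differences of adjacent levels as DoG bands, and treats band 7 (layout blocks) as the low-pass residual. `gaussian_blur`, `dog_bands`, `reconstruct`, and `sigma0` are hypothetical names and parameters.

```python
import math
from typing import List

def gaussian_blur(signal: List[float], sigma: float) -> List[float]:
    """1D Gaussian blur (sigma in samples), clamping at the edges like a texture sampler."""
    radius = max(1, math.ceil(3 * sigma))
    kernel = [math.exp(-0.5 * (i / sigma) ** 2) for i in range(-radius, radius + 1)]
    norm = sum(kernel)
    n = len(signal)
    out = []
    for x in range(n):
        acc = 0.0
        for i, w in enumerate(kernel):
            idx = min(n - 1, max(0, x + i - radius))
            acc += w * signal[idx]
        out.append(acc / norm)
    return out

def dog_bands(signal: List[float], n_bands: int = 8, sigma0: float = 0.5) -> List[List[float]]:
    """n_bands - 1 difference-of-Gaussian bands plus a low-pass residual as the last band.

    Level 0 is the unfiltered signal; level i >= 1 uses sigma0 * 2 ** ((i - 1) / 2),
    so sigma doubles every two levels (half-octave spacing).
    """
    levels = [list(signal)]
    for i in range(1, n_bands):
        levels.append(gaussian_blur(signal, sigma0 * 2 ** ((i - 1) / 2)))
    bands = [[a - b for a, b in zip(levels[k], levels[k + 1])] for k in range(n_bands - 1)]
    bands.append(levels[-1])  # coarsest content, e.g. layout blocks
    return bands

def reconstruct(bands: List[List[float]], weights: List[float]) -> List[float]:
    """Weighted sum of bands; unit weights telescope back to the input."""
    return [sum(w * band[i] for w, band in zip(weights, bands)) for i in range(len(bands[0]))]

# Square-wave grating with an 8-sample period
grating = [1.0 if (x // 4) % 2 == 0 else 0.0 for x in range(64)]
bands = dog_bands(grating)
fovea = reconstruct(bands, [1.0] * len(bands))               # all bands kept
periphery = reconstruct(bands, [0.0, 0.0, 0.0] + [1.0] * 5)  # three finest bands dropped
print(max(abs(a - b) for a, b in zip(fovea, grating)))
```

Because adjacent differences telescope, unit weights return the input up to floating-point error. Modeling a peripheral ring by zeroing whole bands is what produces the 100%→0% steps discussed in Wave 2; a smooth falloff would need fractional weights.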

Also in this release

See the full release notes for details on all changes, including 15 open-source experimental stimuli, arXiv paper updates (Walton 2021, WebGPU tiered roadmap, mongrel Tier 2.5 spec), TEST_LOAD_TIMEOUT for heavy pages, and appendix baseline captures.

References cited: Bouma 1970 · Bowers, Gegenfurtner & Goettker 2025 · Halverson & Hornof 2011 · Hansen et al. 2009 · Hershler & Hochstein 2005 · Itti & Koch 2001 · Mullen & Kingdom 2002 · Pelli & Tillman 2008 · Rovamo & Virsu 1979 · Toet & Levi 1992. See also: Annotated Bibliography · Primer References