2026-02-28

Scrutinizer v1.6 & v1.7: Abstracting and Reading the Latest Science

v1.6.0 · v1.7.0 release notes →

Two releases in February. v1.6 broke apart a growing monolith and shipped biologically grounded peripheral rendering (DoG band decomposition with M-scaling from measured cortical data). v1.7 came from reading a new paper, extracting one equation into a switchable pipeline mode in an afternoon, and discovering a shared theoretical ambiguity worth resolving.

What shipped

v1.6 — De-Monolith + DoG Peripheral Reconstruction

- Refactored a growing monolith (969 lines, one file doing everything) into separate modules. The win is conceptual separation between oculomotor simulation (gaze-model.js), visuospatial working memory (visual-memory.js), and pre-cortical feature extraction (content-analysis.js): three concerns that share data but not logic.

- New Difference-of-Gaussians peripheral rendering: decomposes the hardware MIP chain into 4 approximate Laplacian pyramid bands (box/bilinear filtered, not true Gaussian) with M-scaling rolloff derived from Rovamo & Virsu (1979) cortical magnification data. Preserves low-frequency structure (layout, buttons) while filtering high-frequency detail (serifs, fine textures).

- 138 unit tests for the pure-function modules.
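To make the band decomposition concrete, here is a minimal 1D sketch in JavaScript. It is not the shader: it assumes a box-filtered pyramid (as a stand-in for the hardware MIP chain), nearest-neighbour upsampling in place of bilinear fetches, and hypothetical function names (`downsample`, `decompose`, `reconstruct`). Each band is the difference between two adjacent pyramid levels, so dropping a band's weight removes one range of spatial frequencies.

```javascript
// Box-filter downsample by 2, roughly what one MIP level does.
function downsample(signal) {
  const out = [];
  for (let i = 0; i + 1 < signal.length; i += 2) {
    out.push((signal[i] + signal[i + 1]) / 2);
  }
  return out;
}

// Nearest-neighbour upsample back to the original length.
function upsampleTo(signal, length) {
  const scale = signal.length / length;
  return Array.from({ length }, (_, i) =>
    signal[Math.min(signal.length - 1, Math.floor(i * scale))]);
}

// Split a signal into `bandCount` difference bands plus a low-pass residual.
// Assumes signal.length is a power of two and at least 2 ** bandCount.
function decompose(signal, bandCount = 4) {
  const levels = [signal];
  for (let i = 0; i < bandCount; i++) levels.push(downsample(levels[i]));
  const full = levels.map((level) => upsampleTo(level, signal.length));
  const bands = [];
  for (let i = 0; i < bandCount; i++) {
    bands.push(full[i].map((v, x) => v - full[i + 1][x]));
  }
  return { bands, residual: full[bandCount] };
}

// Rebuild the signal with one weight per band (1 = keep, 0 = drop).
function reconstruct({ bands, residual }, weights) {
  return residual.map((base, x) =>
    bands.reduce((sum, band, i) => sum + weights[i] * band[x], base));
}

// Usage: keep only the two coarsest bands, as a far-periphery pixel might.
const demoSignal = [0, 1, 4, 9, 16, 25, 36, 49, 64, 49, 36, 25, 16, 9, 4, 1];
const demoPeripheral = reconstruct(decompose(demoSignal), [0, 0, 1, 1]);
console.log(demoPeripheral);
```

With all four weights at 1 the bands telescope back to the original signal. In a foveated renderer the weights would fall off with eccentricity, finest band first.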

v1.7 — FOVI Cortical Magnification + Experimental Modes

- Mode 6: FOVI (Cortical Magnification) — implements FOVI's cortical magnification function from Blauch, Alvarez & Konkle (2026) as a switchable MIP-sampling mode. This is the spatial resolution falloff only — we don't implement FOVI's kNN sensor manifold, kernel mapping, or neural network pipeline. Gaussian color decay is Scrutinizer's own addition for comparison.

- Mode 7: Legacy v1.6 — frozen snapshot for A/B comparison.

- Mode 8: Gaussian Desaturation — isolates one variable: exponential color decay ($e^{-r/\sigma}$) inside Scrutinizer's existing Oklab pipeline. Everything else identical to Mode 0. Single variable changed.
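As a rough illustration of the Mode 8 decay term, the sketch below evaluates $e^{-r/\sigma}$ and applies it to the two chroma axes of an Oklab color. The function names are hypothetical, and scaling chroma toward neutral is an assumption made for brevity; the actual pipeline may apply the same weight differently.

```javascript
// Fraction of chroma kept at eccentricity r, for decay constant sigma
// (r and sigma in the same units).
function chromaRetention(r, sigma) {
  return Math.exp(-r / sigma);
}

// Scale the chroma axes of an Oklab color; lightness is left untouched.
// Assumption: decay acts toward neutral (a = b = 0).
function decayChroma({ L, a, b }, r, sigma) {
  const keep = chromaRetention(r, sigma);
  return { L, a: a * keep, b: b * keep };
}

// Usage: the same color at fixation and at r = 2 * sigma.
const demoColor = { L: 0.7, a: 0.1, b: 0.1 };
console.log(decayChroma(demoColor, 0, 10), decayChroma(demoColor, 20, 10));
```

At r = sigma the curve keeps about 37% of chroma (1/e), which gives a quick handle for choosing sigma.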

The architecture is designed for this: modes.json config, per-mode uniform injection, hot-switchable pipelines. Want to contribute a mode? See CONTRIBUTING.md and the project backlog for grad students.
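For readers wondering what a mode entry might involve, here is a hedged sketch of config-driven uniform injection. The schema, the `uniformsForMode` helper, the "Default" label for Mode 0, and every uniform name except `u_desat_floor` are hypothetical; consult the real modes.json for the actual shape.

```javascript
// Hypothetical stand-in for the contents of modes.json.
const MODES = [
  { id: 0, name: "Default", uniforms: {} },
  { id: 6, name: "FOVI (Cortical Magnification)", uniforms: { u_use_cmf: 1 } },
  { id: 7, name: "Legacy v1.6", uniforms: { u_legacy: 1 } },
  { id: 8, name: "Gaussian Desaturation", uniforms: { u_exp_desat: 1 } },
];

// Baseline values every mode starts from (names are illustrative).
const DEFAULT_UNIFORMS = {
  u_use_cmf: 0,
  u_legacy: 0,
  u_exp_desat: 0,
  u_desat_floor: 1.0,
};

// Resolve the uniforms for one mode: defaults first, then the mode's overrides.
function uniformsForMode(modes, defaults, modeId) {
  const mode = modes.find((m) => m.id === modeId);
  if (!mode) throw new Error(`Unknown mode ${modeId}`);
  return { ...defaults, ...mode.uniforms };
}

// Usage: switching modes is just resolving a different uniform set.
console.log(uniformsForMode(MODES, DEFAULT_UNIFORMS, 6));
```

Keeping each mode as data plus a small set of overrides is what lets a new mode land as one config entry and one shader branch.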

From hand-tuned cutoffs to FOVI's CMF

Cortical magnification — spatial resolution falling off with eccentricity — has been the design target since v1.6. Our DoG band cutoffs were derived from Rovamo & Virsu (1979) M-scaling data, hand-tuned to GPU MIP semantics. They worked, but the constants were empirical rather than analytical.

While preparing a system paper for arXiv, we surveyed recent foveated vision literature. On February 3rd, Blauch, Alvarez & Konkle published FOVI: a kNN-convolutional architecture that reformats a variable-density retinal sensor array into a V1-like manifold for deep vision models. It's a substantial piece of work — novel kernel mapping, LoRA-adapted DINOv3, the whole pipeline. What caught our attention was their cortical magnification function — the analytically grounded form of the curve we'd been approximating.

FOVI gave us the clean analytical form: resolution drops off logarithmically from the center of gaze. The further from fixation, the less cortical surface area is dedicated to processing that region — and resolution falls off accordingly.

We adapted their magnification curve to Scrutinizer’s GPU pipeline. FOVI uses the curve to build a variable-density sensor for neural networks; we use it to control how much detail the GPU preserves at each distance from fixation. Same biological curve, different application. (Thanks to Nicholas Blauch for catching an original attribution error.)

We implemented our MIP adaptation of the CMF as a switchable textureLod() sampling mode and dropped it into Scrutinizer as Mode 6. (We did not implement FOVI's kNN manifold, kernel mapping, or neural network pipeline, just the spatial resolution curve.)
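One way to picture the mapping from a magnification curve to a MIP level is sketched below. It assumes the widely used inverse-linear form M(e) = k / (e + a), which may not match FOVI's exact parameterization, and the constant a = 0.75 and the function names are placeholders rather than values from either project. The resulting number is the kind of value that would be passed as the `lod` argument of GLSL's `textureLod()`.

```javascript
// Inverse-linear cortical magnification: M(e) = k / (e + a).
// `a` (degrees) is the eccentricity at which magnification halves.
// 0.75 is a placeholder, not a value taken from FOVI or Scrutinizer.
function corticalMagnification(eccDeg, a = 0.75, k = 1) {
  return k / (eccDeg + a);
}

// Each halving of magnification doubles the texel footprint, i.e. +1 MIP level.
function lodFromEccentricity(eccDeg, a = 0.75, maxLod = 8) {
  // Footprint relative to the fovea; algebraically 1 + eccDeg / a.
  const footprint = corticalMagnification(0, a) / corticalMagnification(eccDeg, a);
  return Math.min(maxLod, Math.log2(footprint));
}

// Usage: level of detail at a few eccentricities (degrees).
for (const ecc of [0, 1, 5, 20]) {
  console.log(ecc, lodFromEccentricity(ecc).toFixed(2));
}
```

Under this assumption the level of detail grows with the logarithm of eccentricity, which matches the logarithmic falloff described above.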

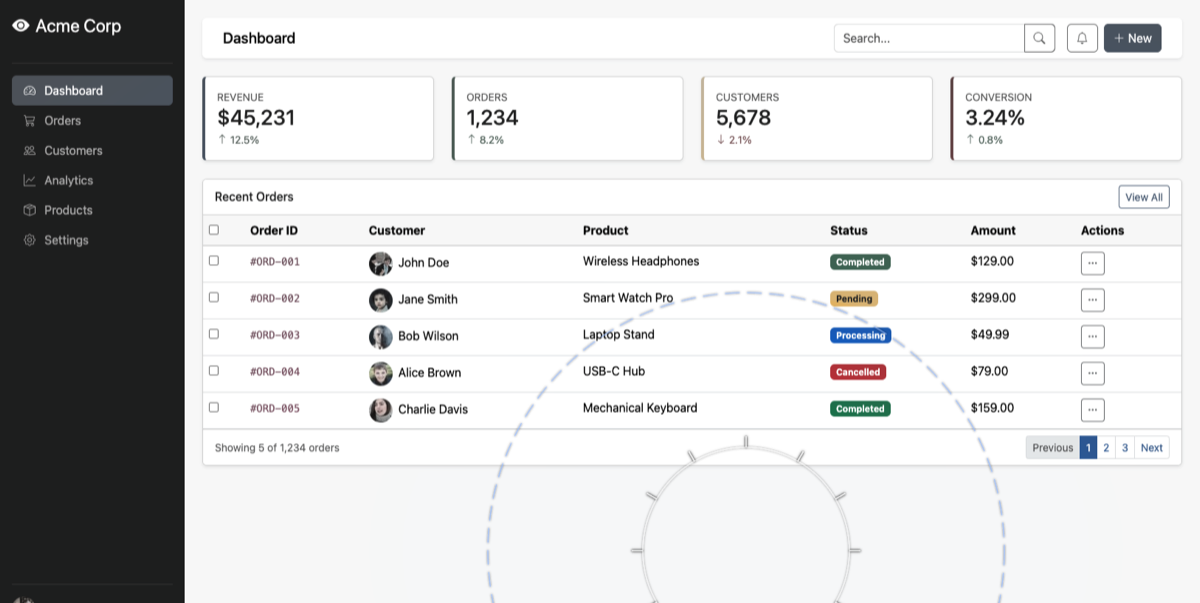

Side by side, our CMF-derived cutoffs preserve detail roughly 3.5–4.6× further into the periphery:

| Band | CMF-adapted cutoff | v1.6 cutoff | Ratio |
|---|---|---|---|
| Serifs (c0) | 0.58 | 0.15 | 3.8× |
| Letters (c1) | 1.39 | 0.30 | 4.6× |
| Words (c2) | 2.54 | 0.60 | 4.2× |
| Buttons (c3) | 4.17 | 1.20 | 3.5× |

That gap reflects crowding, receptive-field (RF) growth, and contrast sensitivity: factors FOVI leaves to the downstream network and Scrutinizer bakes into the shader.

Update (March 2026): These captures were re-generated after we ran a full-spectrum color diagnostic through the pipeline. The original comparison revealed that salient regions were capping desaturation at 85%, an aesthetic guard that left visible color in the far periphery. Investigating why led us to expose the cap as a tunable parameter (u_desat_floor) and default it to 1.0 (full desaturation). The images above now reflect the corrected pipeline.

The color question: why blue survives

FOVI doesn't address color; it's a spatial resolution transform. Scrutinizer has to pick a transfer function for peripheral desaturation, and the results are strikingly different. Run a color wheel through both pipelines and it's immediately visible: Scrutinizer preserves blue-green tints deep into the periphery while red and orange vanish. The FOVI mode, using the Gaussian color decay we added for comparison, strips all color uniformly.

This isn't the saliency gate: it happens on pure image content with no DOM structure to preserve. The mechanism is the eigengrau tint in Scrutinizer's Oklab desaturation. Peripheral pixels don't fade to neutral gray; they fade toward eigengrau (L=0.1, a=0, b=-0.05), a dark blue-gray that models the intrinsic color of rod-dominated dark adaptation. That b=-0.05 on the blue-yellow axis means the destination itself has a blue bias. Red gets pulled away from its warm coordinates and pushed cool. Blue gets reinforced. The result: blue and green survive in the periphery while red goes first.
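The asymmetry can be checked with a few lines of arithmetic. This sketch assumes the fade is a plain linear mix in Oklab toward the eigengrau point quoted above; the function name and the mix parameter `t` are hypothetical, and the red and blue coordinates are approximate Oklab values for the sRGB primaries.

```javascript
// Destination color from the text: a dark blue-gray in Oklab.
const EIGENGRAU = { L: 0.1, a: 0.0, b: -0.05 };

// Linear mix toward eigengrau; t = 0 keeps the color, t = 1 is full fade.
function fadeTowardEigengrau(color, t) {
  const mix = (from, to) => from + (to - from) * t;
  return {
    L: mix(color.L, EIGENGRAU.L),
    a: mix(color.a, EIGENGRAU.a),
    b: mix(color.b, EIGENGRAU.b),
  };
}

// Approximate Oklab coordinates of the sRGB primaries.
const RED = { L: 0.628, a: 0.225, b: 0.126 };
const BLUE = { L: 0.452, a: -0.032, b: -0.312 };

// Usage: watch the sign of b (blue-yellow axis) as the fade increases.
for (const t of [0, 0.5, 0.8, 1]) {
  console.log(t, fadeTowardEigengrau(RED, t).b, fadeTowardEigengrau(BLUE, t).b);
}
```

With these numbers, red's b coordinate crosses zero at about t = 0.72 and ends on the blue side of the axis, while blue's b stays negative throughout. Both colors shrink by the same factor; only the destination differs from neutral.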

The empirical motivation for this is strong. Mullen & Kingdom (2002) measured red-green vs. blue-yellow cone opponency across eccentricity with independently scaled stimuli — controlling for the confound that the channels have different spatial tuning. They found red-green (L-M) sensitivity is a foveal specialization that collapses in the periphery, while blue-yellow (S-(L+M)) declines gradually, tracking achromatic sensitivity.

A 2025 far-periphery study put numbers on it:

| Channel | Sensitivity at 15° (% of foveal) | Sensitivity at 75° (% of foveal) | Eccentricity at 50% sensitivity |
|---|---|---|---|
| Red-green (L-M) | 29% | 4% | ~8–10° |
| Blue-yellow (S-(L+M)) | 79% | 18% | ~40–50° |
| Achromatic (L+M) | 76% | 12% | ~35–45° |

At 15 degrees, red-green has lost 71% of its foveal sensitivity while blue-yellow has lost only 21%. The 50% crossover eccentricities differ by a factor of roughly 4–5.

The reason is in the wiring. The blue-yellow pathway has dedicated retinal wiring that covers the entire retina — it’s evolutionarily ancient. Red-green sensitivity, by contrast, depends on fine-grained one-to-one connections that only exist near the fovea. In the periphery, those connections pool together and the red-green signal collapses. Red-green is a foveal specialization. Blue-yellow is retina-wide.

Scrutinizer’s desaturation is correct in direction: peripheral pixels fade toward a blue-shifted dark gray (the Purkinje effect), so blue persists and red goes first. But both color channels are reduced by the same amount — the asymmetry comes from the destination color, not from channel-specific decay rates. v1.9 fixes this with per-channel decay.

A shared open question

Both pipelines compared here model peripheral color loss, but neither captures the full biology. The FOVI mode, with the Gaussian decay we added to it, strips all color uniformly. Scrutinizer's default fades toward a blue-shifted dark gray (eigengrau), which gives the right qualitative asymmetry (blue persists, red goes first) but through the wrong mechanism.

The correct approach is separate decay rates for red-green (fast, foveal specialization) and blue-yellow (slow, retina-wide). v1.9 shipped this fix — see the chromatic pipeline post. We’ve also opened a discussion with the FOVI team about per-channel decay.
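A minimal sketch of what separate decay rates could look like, assuming a simple half-life curve per channel. This is not the v1.9 implementation. The half-sensitivity eccentricities are midpoints of the ranges in the table above, the function names are hypothetical, and treating Oklab's a and b axes as stand-ins for the L-M and S-(L+M) channels is itself an approximation.

```javascript
// Eccentricity (degrees) at which each channel drops to half its foveal
// sensitivity. Midpoints of the table's ranges; illustrative, not fitted.
const HALF_ECC_RG = 9;  // red-green, from ~8–10 degrees
const HALF_ECC_BY = 45; // blue-yellow, from ~40–50 degrees

// Fraction of sensitivity kept at a given eccentricity.
function retention(eccDeg, halfEcc) {
  return Math.pow(0.5, eccDeg / halfEcc);
}

// Decay the two chroma axes at different rates; lightness is left alone.
function decayPerChannel({ L, a, b }, eccDeg) {
  return {
    L,
    a: a * retention(eccDeg, HALF_ECC_RG), // red-green: fast falloff
    b: b * retention(eccDeg, HALF_ECC_BY), // blue-yellow: slow falloff
  };
}

// Usage: retained fractions at the two eccentricities the table reports.
for (const ecc of [15, 75]) {
  console.log(ecc, retention(ecc, HALF_ECC_RG), retention(ecc, HALF_ECC_BY));
}
```

At 15° this curve keeps about 31% of red-green and 79% of blue-yellow, close to the table's 29% and 79%. At 75° it is too aggressive on red-green (about 0.3% against the measured 4%) and too generous on blue-yellow (about 31% against 18%), so a single half-life per channel is only a first approximation.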

Making science go fast

Paper published February 3rd. CMF equation extracted and shipping as a switchable mode by February 28th. The modular architecture made this trivial: one new uniform, one branch in the MIP sampling function, one entry in modes.json. Reading a paper and absorbing the relevant piece into your system should be the same afternoon. That's what branch points are for.

Blauch's larger program

FOVI is one piece. Blauch's arc — from topographic organization in ventral temporal cortex, through foveated vision, to Topoformer (topographic self-attention in language transformers) — builds the case that cortical spatial organization is a general computational principle. The retina and cortex aren't just biology to model — they're engineering solutions to port. The color decay question and the magnification gap matter to both pipelines.

Links: FOVI paper (Blauch et al.) · GitHub · Full changelog